Payment AI's MLOps Problem: Model Drift, Retraining Cadence, and Production Monitoring

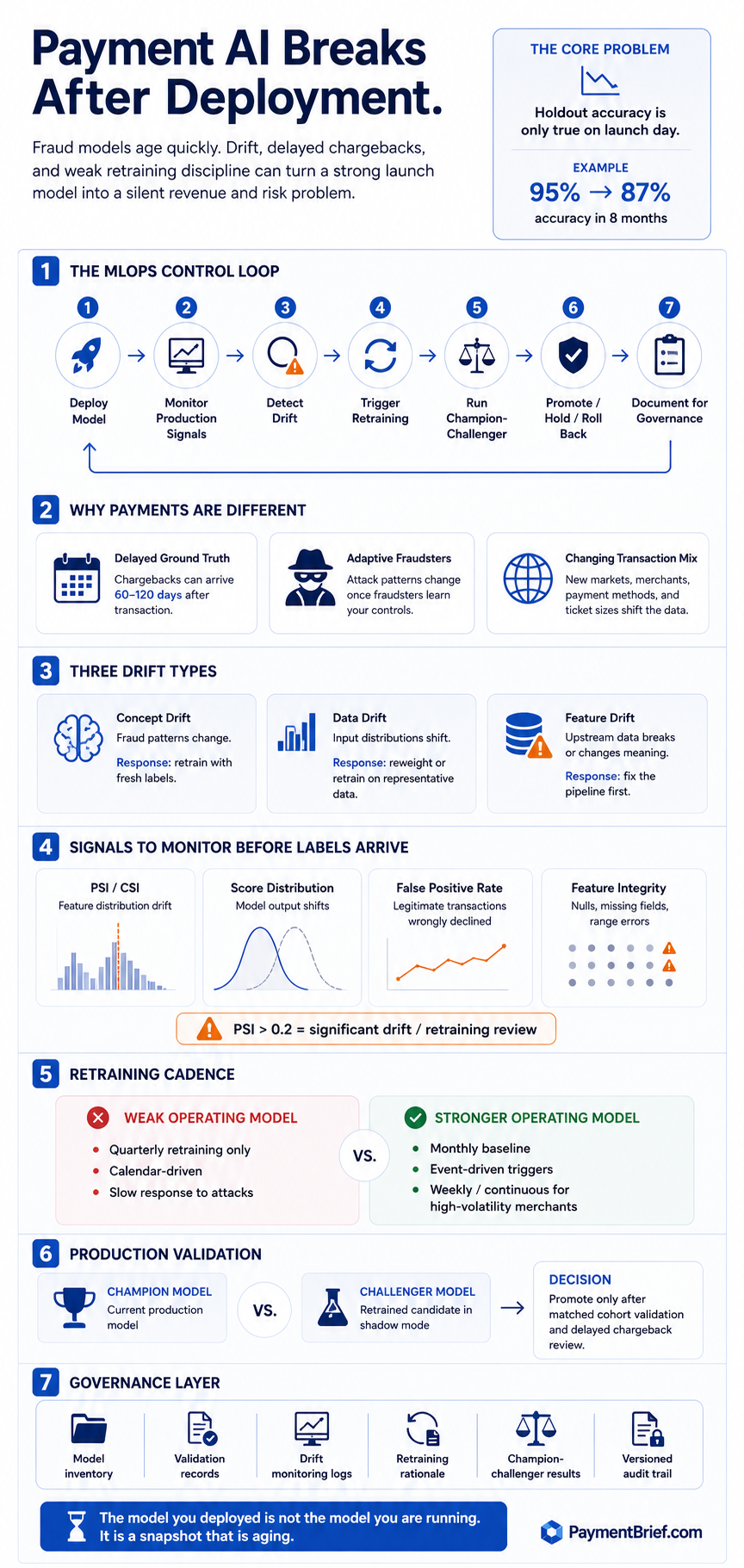

Most payment AI articles end at deployment. A bank's fraud model dropped from 95% to 87% accuracy in eight months. Chargebacks arrive 60–120 days late. Here is what production fraud ML actually requires to stay accurate.

Fraud model: 95%→87% accuracy in 8 months. Chargebacks arrive 60–120 days late — ground truth structurally delayed. PSI >0.2 triggers retraining. Monthly beats quarterly. NYDFS Oct 2024, PRA SS1/23 (May 2024) require formal model governance. WhyLabs shut down 2025.

Most published writing about AI in payments covers the deployment moment: a new fraud model is trained, evaluated on a holdout set, pushed to production, and declared a success. The metric that gets cited is accuracy on that holdout set — a number that was true the day the model was frozen and becomes less true every week after.

What happens at month eight is less often discussed.

A documented 2024 case: a bank deployed a credit risk model in January. By September, accuracy had dropped from 95% to 87%. McKinsey’s 2023 survey found 40% of companies deploying AI models experience noticeable performance degradation within the first year. For fraud specifically — where the adversary is actively adapting to your model, transaction patterns shift with merchant mix and geography, and ground truth arrives months late — the degradation timeline can be significantly shorter.

Fraud ML and rule engine hybrid architectures have well-developed literature on initial deployment. The production maintenance problem is the less-documented side. This article covers the three failure modes, the monitoring approach that works without labels, retraining triggers, governance obligations, and the tooling landscape as it stands in mid-2026.

The labeling lag: why fraud ML is different

In most ML domains, ground truth arrives quickly. A recommendation model can measure click-through rate in seconds. An image classifier’s labels exist before training. A sentiment model evaluates against human-labeled data.

Fraud ML’s ground truth is the chargeback. And chargebacks arrive on a structurally delayed timeline: Visa allows cardholders up to 120 days from the transaction date (or expected delivery date) to file a dispute; Mastercard’s window is similar. A transaction processed in January may not generate a confirmed fraud label until April or May. That is up to four months of production operation during which the model makes decisions with no feedback loop.
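The date arithmetic behind that lag can be sketched in a few lines. This is a minimal illustration, assuming a flat 120-day window; real dispute windows vary by network and reason code, and the function names are hypothetical.

```python
from datetime import date, timedelta

# Assumption: a flat 120-day window, the upper bound cited above.
DISPUTE_WINDOW_DAYS = 120

def label_maturity_date(transaction_date, window_days=DISPUTE_WINDOW_DAYS):
    """Earliest date on which 'no chargeback' can be treated as a final label."""
    return transaction_date + timedelta(days=window_days)

def is_label_mature(transaction_date, today, window_days=DISPUTE_WINDOW_DAYS):
    """True once the dispute window for this transaction has closed."""
    return today >= label_maturity_date(transaction_date, window_days)

print(label_maturity_date(date(2025, 1, 15)))  # 2025-05-15
```

Any evaluation that treats "no chargeback yet" as "legitimate" before this date will understate fraud in the most recent cohorts.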

The practical consequence: a model that starts degrading in February due to a new fraud pattern won’t surface performance signals until the May chargeback cohort arrives. By then, the fraudster has had roughly three months of successful operations against your model.

This is not a theoretical concern. Researchers at a major payment network published PRODEM at ECML PKDD 2025 — a framework specifically for proactive detection of fraud model degradation with delayed labels. Their approach: train a secondary “meta-model” that learns to predict when the deployed fraud model will make errors, using observable signals that arrive before ground truth. The meta-model fires an early warning before the label-dependent performance metrics catch up.

The operational implication: you cannot wait for accuracy metrics to tell you your model is degrading. You need proxy signals that move faster than chargebacks.

Three kinds of drift

Concept drift is the fraud team’s nightmare: the relationship between transaction features and actual fraud changes. Fraudsters learn your model’s decision boundary. New attack patterns — synthetic identity fraud, account takeover via SIM swap, coordinated carding operations against specific MCC categories — generate transactions that look like legitimate traffic by your model’s learned criteria. Your features are stable; the underlying pattern they need to capture is not.

Data drift is quieter but equally damaging: the distribution of input features shifts without the fraud-to-legitimate relationship necessarily changing. You expand into a new market, add a new payment method, or your merchant mix shifts toward higher-ticket digital goods. The transaction profile your model was trained on is no longer representative of what it is scoring. The model hasn’t learned incorrectly — it has simply aged out of relevance.

Feature drift is the operational failure mode: an upstream change breaks a feature before anyone notices. A PSP integration update changes how session data is structured. A new checkout flow removes a device fingerprint field. Your feature pipeline continues to run, produces values, and the model continues to score — but the values no longer mean what they did during training.

All three require different responses. Concept drift requires model retraining with fresh labeled data. Data drift may be addressed by retraining on a more representative data sample or by reweighting the training distribution. Feature drift requires a pipeline fix, not model retraining — and the monitoring system needs to catch it before blaming the model.

What you can actually measure without labels

The correct response to the labeling lag is not to wait for labels but to build monitoring that fires on observable signals.

PSI (Population Stability Index) is the dominant drift metric in payment and credit ML. It bins a variable, either an input feature or the model’s output score, and measures how far the share of traffic in each bin has moved between the training period and the current scoring period. The standard thresholds: PSI below 0.1 means the distributions are similar and the model is stable; 0.1 to 0.2 means moderate drift that should be investigated; above 0.2 means significant drift, and retraining is warranted.
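A minimal PSI sketch using only the standard library is shown here. The bin edges, the `eps` floor for empty bins, and the sample amounts are illustrative assumptions; production implementations usually derive bins from baseline quantiles.

```python
import bisect
import math

def bin_proportions(values, bin_edges, eps=1e-6):
    """Share of values in each bin; eps keeps empty bins away from log(0)."""
    counts = [0] * (len(bin_edges) + 1)
    for v in values:
        counts[bisect.bisect_right(bin_edges, v)] += 1
    return [max(c / len(values), eps) for c in counts]

def psi(baseline, current, bin_edges):
    """Population Stability Index between two non-empty samples."""
    e = bin_proportions(baseline, bin_edges)
    a = bin_proportions(current, bin_edges)
    return sum((ai - ei) * math.log(ai / ei) for ai, ei in zip(a, e))

def classify_psi(value):
    """Map a PSI value onto the three bands described above."""
    if value < 0.1:
        return "stable"
    if value <= 0.2:
        return "investigate"
    return "retrain"

# Hypothetical transaction amounts: training period vs. current window.
baseline_amounts = [12, 18, 25, 40, 55, 70, 90, 120, 150, 300]
current_amounts = [60, 75, 95, 130, 160, 210, 280, 350, 420, 500]
edges = [50, 100, 200]  # sorted bin boundaries (assumed)

value = psi(baseline_amounts, current_amounts, edges)
print(round(value, 3), classify_psi(value))
```

In this toy sample the lowest bin empties out completely, so the result lands well above the 0.2 band. The `eps` floor strongly influences the value whenever a bin is empty, which is one reason to choose bins that keep every baseline bin populated.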

PSI is symmetric, unlike KL divergence: swapping the baseline and the current distribution gives the same value, so a single number describes the shift in either direction. In high-volume payment environments, practitioners run PSI checks every 15 to 30 minutes on the full feature vector for millions of transactions. Computed per input feature, the same statistic is usually called CSI (Characteristic Stability Index); it tells you which specific input features are drifting, letting you distinguish data drift from feature pipeline failures.
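The symmetry follows directly from the definition. Writing e_i for the baseline share of bin i and a_i for the current share (notation introduced here for illustration), PSI expands into the sum of the two KL divergences:

```latex
\begin{aligned}
\mathrm{PSI}(a, e) &= \sum_{i} (a_i - e_i)\,\ln\frac{a_i}{e_i} \\
                   &= \sum_{i} a_i \ln\frac{a_i}{e_i} \;+\; \sum_{i} e_i \ln\frac{e_i}{a_i} \\
                   &= D_{\mathrm{KL}}(a \,\|\, e) + D_{\mathrm{KL}}(e \,\|\, a)
\end{aligned}
```

Swapping a and e exchanges the two terms and leaves the total unchanged.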

Score distribution monitoring: track the distribution of the model’s output scores over rolling windows. A sudden shift toward more conservative scores (compressed toward 0) or more aggressive scores (shifted toward 1) is a signal that something has changed, independently of whether ground truth supports it. This fires immediately — no label lag.
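One simple way to operationalize this is sketched below. It tracks only the daily mean score against a rolling baseline, which is an assumption made for brevity; a fuller monitor would also watch quantiles or the whole histogram. The two-standard-deviation band and the sample values are illustrative.

```python
import math
from statistics import mean, stdev

def score_shift_zscore(baseline_daily_means, today_mean):
    """Z-score of today's mean score against the baseline window's daily means."""
    mu = mean(baseline_daily_means)
    sigma = stdev(baseline_daily_means)  # needs at least two baseline days
    if sigma == 0:
        if today_mean == mu:
            return 0.0
        return math.copysign(math.inf, today_mean - mu)
    return (today_mean - mu) / sigma

def outside_band(baseline_daily_means, today_mean, band=2.0):
    """True when today's mean sits more than `band` standard deviations away."""
    return abs(score_shift_zscore(baseline_daily_means, today_mean)) > band

# Hypothetical daily mean fraud scores from a short baseline window.
baseline = [0.10, 0.12, 0.11, 0.09, 0.13]
print(outside_band(baseline, 0.16))   # True: scores shifted toward 1
print(outside_band(baseline, 0.115))  # False: within normal variation
```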

False positive rate tracking: often the last metric operators wire up, but practically the most important. A rising false positive rate (legitimate transactions declined) is visible in real time through authorization rate data. It’s one of the most reliable early signals that a fraud model is mis-calibrated — and the metric your merchants will notice first.

NannyML (open-source and cloud) was built specifically for this problem: it estimates model performance without requiring ground truth, using Confidence-Based Performance Estimation (CBPE). For fraud teams with delayed labels, it is one of the few monitoring tools designed around the actual operating condition rather than assuming labels are always available.
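The core idea behind confidence-based estimation can be illustrated without the library. The sketch below is not NannyML's API; it is a from-scratch illustration that assumes the model's scores are well-calibrated fraud probabilities, so each score can be read as the expected contribution to the confusion matrix.

```python
def expected_confusion(scores, threshold=0.5):
    """Expected confusion-matrix cells, treating each score as P(fraud)."""
    tp = fp = fn = tn = 0.0
    for p in scores:
        if p >= threshold:   # model flags the transaction
            tp += p          # probability the flag is correct
            fp += 1.0 - p    # probability the flag is a false alarm
        else:                # model lets the transaction through
            fn += p          # probability it was fraud after all
            tn += 1.0 - p
    return {"tp": tp, "fp": fp, "fn": fn, "tn": tn}

def estimated_precision_recall(scores, threshold=0.5):
    """Label-free estimates; None when the denominator is zero."""
    m = expected_confusion(scores, threshold)
    flagged = m["tp"] + m["fp"]
    fraud = m["tp"] + m["fn"]
    precision = m["tp"] / flagged if flagged else None
    recall = m["tp"] / fraud if fraud else None
    return precision, recall

precision, recall = estimated_precision_recall([0.9, 0.8, 0.3, 0.1])
print(round(precision, 3), round(recall, 3))  # 0.85 0.81
```

The estimate is only as good as the calibration. Under data drift it can stay informative, but when the relationship between features and fraud itself changes, the scores stop being trustworthy probabilities and the estimate degrades with them.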

Retraining cadence: why quarterly is wrong for fraud

The standard practice in many enterprises is quarterly retraining — map it to a calendar, run the cycle, deploy. For credit scoring, quarterly may be appropriate. For fraud, quarterly means a model trained in October is still in production in January with no updates — a three-month window across a holiday shopping season where fraud patterns are at their most active.

The practitioner consensus from fraud teams that have been in production for 12 to 24 months: monthly retraining as the scheduled baseline, with event-driven triggers that can pull a retrain forward when drift appears. High-volatility environments (large merchants, marketplaces, digital goods platforms) warrant weekly cadences or continuous adaptive models.

The more important distinction is scheduled versus event-driven. Scheduled retraining ignores the signal; it fires the same week regardless of what the model is doing. Event-driven retraining uses drift thresholds as triggers: when PSI on key features exceeds 0.2, or when score distribution shifts beyond a configured standard deviation band, or when false positive rates rise above a rolling baseline threshold — retraining kicks off.

A financial services team documented the gap directly in 2025: a coordinated fraud attack exposed the inadequacy of monthly scheduled retraining. Moving to event-triggered continuous training closed the window from 30 days to hours.

FICO’s Adaptive Analytics — the engine behind Falcon Fraud Manager — takes this further: daily refreshes, continuous adaptation to new fraud patterns. The FICO Chief Analytics Officer argued that adaptive models reduce the criticality of batch retraining schedules — the model is always current, so the gap between “training data” and “today’s fraud” is measured in days, not quarters.

Champion-challenger in production

The standard production pattern is champion-challenger: your current production model is the champion, a retrained candidate is the challenger. The challenger runs on a fraction of traffic — receiving the same features, producing scores, but having its decisions overridden by the champion. You accumulate challenger outcomes and compare performance against the champion on the same traffic cohort before promoting.

This is structurally more complex for fraud than for conversion optimization, because you cannot show the same transaction to both models and compare outcomes in a true A/B sense — the transaction either gets declined or it doesn’t. The practical approach: route a percentage of incoming transactions to the challenger’s scoring pipeline while the champion makes the final decision; use the challenger’s score and the eventual ground truth (chargeback or no chargeback) to evaluate challenger performance on a matched cohort over the next 60-90 days before promoting.
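A minimal sketch of that routing and evaluation pattern follows. The record fields, the 10% shadow fraction, the 0.8 decline threshold, and the function names are all hypothetical; `champion` and `challenger` stand in for whatever scoring callables a team actually runs.

```python
import hashlib

def in_shadow_cohort(transaction_id, fraction):
    """Deterministically assign a stable share of transactions to the challenger."""
    digest = hashlib.sha256(transaction_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big")
    return bucket < int(fraction * 2**64)

def score_transaction(txn, champion, challenger,
                      shadow_fraction=0.1, decline_threshold=0.8):
    """Champion decides; the challenger score is logged but never acted on."""
    champion_score = champion(txn)
    record = {
        "transaction_id": txn["id"],
        "champion_score": champion_score,
        "declined": champion_score >= decline_threshold,
        "challenger_score": None,
    }
    if in_shadow_cohort(txn["id"], shadow_fraction):
        record["challenger_score"] = challenger(txn)
    return record

def recall_at_threshold(records, matured_labels, score_key, threshold):
    """Recall on confirmed-fraud transactions that have a score for score_key."""
    fraud = [r for r in records
             if matured_labels.get(r["transaction_id"]) is True
             and r[score_key] is not None]
    if not fraud:
        return None
    caught = sum(1 for r in fraud if r[score_key] >= threshold)
    return caught / len(fraud)
```

Comparing `recall_at_threshold(..., "champion_score", ...)` with the same call on `"challenger_score"` over the shadow cohort gives a matched comparison once labels mature. One caveat: transactions the champion declined never generate chargebacks, so the labeled cohort is biased toward what the champion approved.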

Stripe’s 2025 Radar update used this pattern: a new multihead model ran in shadow mode on production traffic before the rollout that delivered greater than 30% fraud reduction on eligible transactions and a 17% year-over-year improvement in dispute rates, even as industry-wide e-commerce fraud grew 15%.

Revolut’s approach is further along: their PRAGMA foundation model (published April 2025) was trained on 24 billion banking events across 111 countries. Results: 65% fraud recall improvement and 130% credit scoring improvement versus prior models. Foundation model architecture means Revolut’s challenger evaluations are model family upgrades, not just weight refreshes.

Adyen’s January 2025 Uplift launch reported 86% reduction in manual fraud rules, with 35% of businesses eliminating manual rules entirely after AI optimization — an indicator that their champion-challenger cycles have been running long enough to generate confidence in the model’s coverage.

The governance layer

Production fraud ML is now a regulated activity in multiple jurisdictions. The compliance surface has expanded significantly in 2024-2025.

PRA SS1/23 (UK): Model Risk Management Principles published May 2023, effective May 17, 2024 for UK banks, building societies, and PRA-designated investment firms using internal models. Five principles covering model identification, risk-tiering, documentation, validation, and governance. First-year self-assessments are complete across the sector; multi-year remediation plans are now in execution. UK-regulated payment operators with internal fraud models are in scope.

NYDFS (US): The Department of Financial Services issued AI cybersecurity guidance on October 16, 2024. Under existing NYDFS cybersecurity regulations (23 NYCRR Part 500), AI risks must be incorporated into annual risk assessments. A comprehensive data inventory identifying all systems using AI was required by November 2025. Third-party AI use clauses must be included in vendor contracts. NYDFS’s approach uses existing regulatory frameworks rather than creating new AI-specific rules — but the compliance obligations are real and active.

EU AI Act: In force since August 2024. The Act classifies AI systems that evaluate creditworthiness or establish credit scores for natural persons as high-risk, subject to conformity assessment, transparency, and human oversight requirements. The same Annex III entry explicitly excludes AI systems used to detect financial fraud, so a fraud model is not high-risk on that basis alone. The EBA completed a mapping exercise in 2025 and concluded that existing banking and payments legislation is largely compatible with the AI Act, and that no new standalone EBA AI guidelines are immediately planned.

ECB Internal Models Guide (July 2025): Updated with a new machine learning section specifying supervisory expectations for ML techniques in regulatory capital models. Relevant for operators using ML in credit risk models that feed into capital calculations.

PCI DSS v4.0.1 and AI: The PCI Security Standards Council published its first AI guidance in March 2025: “Integrating Artificial Intelligence in PCI Assessments.” The framework’s position: 92% of QSAs now classify AI tools that interact with cardholder systems as in-scope for PCI assessments. Under Requirement 10.5, model version logs with timestamps must be retained. Inference metadata — model version, parameter changes, inference IDs, access events — must be logged with pseudonymized references. For full context on the current PCI DSS v4.0 obligations that already apply to your payment environment, the Year One audit findings piece covers the enforcement landscape.
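As an illustration of what a pseudonymized inference log entry might look like, here is a minimal sketch. The field names and the keyed-hash approach are assumptions chosen for the example, not a statement of what the guidance prescribes; key management and retention are out of scope.

```python
import hashlib
import hmac
import json
import uuid
from datetime import datetime, timezone

def pseudonymize(identifier, secret_key):
    """Keyed hash so the log carries a stable reference, not the raw identifier."""
    return hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()

def build_inference_log(model_version, account_ref, score, secret_key, now=None):
    """Serialize one inference event as a JSON line (hypothetical schema)."""
    now = now or datetime.now(timezone.utc)
    return json.dumps({
        "inference_id": str(uuid.uuid4()),
        "timestamp": now.isoformat(),
        "model_version": model_version,
        "subject_ref": pseudonymize(account_ref, secret_key),
        "score": round(score, 6),
    }, sort_keys=True)

# Hypothetical values; in practice the key would come from a secrets manager.
line = build_inference_log("fraud-v12.3", "acct-000123", 0.0421, b"demo-key")
print(line)
```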

The practical compliance checklist that fraud teams need to maintain:

- Model inventory: every production model documented, version-controlled, risk-tiered

- Validation records: independent validation before promotion, documented outcomes

- Drift monitoring logs: PSI and score distribution tracking, retained and auditable

- Retraining rationale: documentation of what triggered each retrain, what data was used

- Champion-challenger records: outcomes comparison before each promotion

The tooling landscape in mid-2026

The monitoring vendor landscape shifted materially in early 2025. WhyLabs discontinued commercial operations and was open-sourced under Apache 2.0 in January 2025. The codebase is available on GitHub but there is no commercial vendor behind it. Operators who had WhyLabs in their MLOps stack need to reassess.

Evidently AI remains the strongest open-source option — Python-native, broad drift metric library including PSI, KS, KL divergence, and Jensen-Shannon distance, strong visualization. Best suited for teams with internal MLOps infrastructure who want to build their own monitoring pipelines. Cloud tier available for teams without dedicated infra.

Arize AI has gained the fastest mindshare — 24% as of late 2025, up from 17.8% a year prior. Strong SHAP-based explainability, good for unstructured data, active development. Increasingly used in regulated financial services.

Fiddler AI is the enterprise-grade choice for regulated environments: real-time monitoring, drift detection, bias and fairness tooling, post-hoc explainability, SOC 2 compliance. The feature set maps most directly to what PRA SS1/23 and NYDFS governance requirements are asking for in terms of audit trail and documentation.

NannyML is the specialist tool for the labeling lag problem specifically — its Confidence-Based Performance Estimation estimates model performance without ground truth. For fraud teams that cannot wait 60-120 days for chargeback labels, NannyML fills a gap that general-purpose monitoring tools don’t address. Open-source and cloud versions available.

The tooling decision is not just a technical one. PRA SS1/23 and NYDFS both require governance evidence that monitoring is in place and reviewed. The tooling you choose needs to produce audit-ready outputs, not just alerts that get cleared in a Slack channel.

The operator playbook

The operators who avoid the degradation problem treat production monitoring as part of the model, not an afterthought to deployment.

What to wire up before go-live: PSI monitoring on every input feature, score distribution tracking with rolling baseline, false positive rate tracking against authorization data, feature pipeline integrity checks (null rates, value range bounds). All of this fires without waiting for labels.
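The feature pipeline integrity checks in that list need no labels and no model at all. A minimal sketch, assuming each batch is a list of dicts and using hypothetical feature names and limits:

```python
def check_feature_integrity(rows, specs):
    """Return (feature, problem, value) tuples for null-rate and range violations."""
    issues = []
    n = len(rows)
    for name, spec in specs.items():
        values = [row.get(name) for row in rows]  # a missing key counts as null
        nulls = sum(1 for v in values if v is None)
        null_rate = nulls / n if n else 1.0       # an empty batch is itself suspect
        if null_rate > spec["max_null_rate"]:
            issues.append((name, "null_rate", null_rate))
        out_of_range = sum(
            1 for v in values
            if v is not None and not (spec["min"] <= v <= spec["max"])
        )
        if out_of_range:
            issues.append((name, "out_of_range", out_of_range))
    return issues

# Hypothetical batch: the device field has mostly gone missing upstream.
rows = [
    {"amount": 20.0, "device_age_days": 3},
    {"amount": -5.0, "device_age_days": None},
    {"amount": 45.0},
    {"amount": 80.0, "device_age_days": None},
]
specs = {
    "amount": {"max_null_rate": 0.0, "min": 0.0, "max": 10000.0},
    "device_age_days": {"max_null_rate": 0.25, "min": 0, "max": 3650},
}
print(check_feature_integrity(rows, specs))
```

A spike in null rate on a single feature points at the pipeline rather than the model, which is the distinction the monitoring system needs to make before a retrain is queued.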

What to configure as triggers: PSI above 0.2 on any tier-1 feature → retraining queue. Score distribution shift beyond two standard deviations from 30-day baseline → investigation. False positive rate above rolling 90th percentile for five consecutive hours → escalation.
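Those three triggers can be expressed as one small decision function. This is a sketch under assumptions: the metric values are computed elsewhere, the action names are placeholders, and the false positive series is whatever hourly proxy the team derives from authorization data.

```python
def trailing_breaches(values, limit):
    """Length of the current run of most-recent values above the limit."""
    run = 0
    for v in reversed(values):
        if v > limit:
            run += 1
        else:
            break
    return run

def evaluate_triggers(tier1_psi, score_zscore, hourly_fp_rate, fp_rate_p90,
                      psi_limit=0.2, z_limit=2.0, fp_hours=5):
    """Map current monitoring metrics onto the actions described above."""
    actions = []
    drifted = sorted(name for name, value in tier1_psi.items() if value > psi_limit)
    if drifted:
        actions.append(("retraining_queue", drifted))
    if abs(score_zscore) > z_limit:
        actions.append(("investigation", score_zscore))
    breaches = trailing_breaches(hourly_fp_rate, fp_rate_p90)
    if breaches >= fp_hours:
        actions.append(("escalation", breaches))
    return actions

# Hypothetical snapshot: two drifting features and five bad hours in a row.
actions = evaluate_triggers(
    tier1_psi={"amount": 0.05, "mcc": 0.31, "country": 0.24},
    score_zscore=1.2,
    hourly_fp_rate=[0.02, 0.05, 0.05, 0.06, 0.05, 0.07],
    fp_rate_p90=0.04,
)
print(actions)  # [('retraining_queue', ['country', 'mcc']), ('escalation', 5)]
```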

Weekly review cadence: Score distribution plots, PSI trend charts, authorization rate by risk tier. Monthly: challenger evaluation against champion on labeled cohort. Quarterly: full model audit documentation for compliance purposes.

The authorization optimization connection: Model drift in fraud ML has a direct authorization rate consequence. A conservative-drifting fraud model declines more legitimate transactions. You will see this in auth rate data before you see it in fraud metrics. Treat rising decline rates on low-risk segments as a fraud model monitoring signal, not just an acquiring problem.

For teams using multi-acquirer routing across PSPs: drift in your fraud model changes the routing calculus. If your model’s score distribution shifts, the routing rules calibrated against it need review too.

The hard lesson from operators 18-24 months into production fraud ML: the model you deployed is not the model you are running. It is a snapshot that is aging. The discipline of monitoring, triggering, and retraining on signal — rather than schedule — is what keeps the snapshot current.

Sources

A bank's fraud model dropped from 95% to 87% accuracy in 8 months (January–September 2024)

40% of companies deploying AI models experienced noticeable performance degradation within the first year

Visa and Mastercard cardholder dispute windows: up to 120 days from transaction date

PRODEM paper (ECML PKDD 2025): meta-model framework for detecting fraud model degradation before labels arrive

Revolut PRAGMA foundation model (April 2025): 24B banking events, 65% fraud recall lift, 130% credit scoring improvement

Adyen Uplift (January 2025): 86% reduction in manual fraud rules, up to 6% conversion boost

Stripe Radar (2025): 17% YoY dispute rate reduction; >30% fraud reduction with new multihead model

NYDFS AI cybersecurity guidance (October 16, 2024): AI systems required in annual risk assessments and data inventory (deadline November 2025)

PRA SS1/23 Model Risk Management Principles: effective May 17, 2024 for UK banks

PCI SSC AI guidance (March 2025): 92% of QSAs classify AI tools as in-scope for PCI assessments