LLMs in AML Transaction Monitoring: What Operators Can Deploy Today

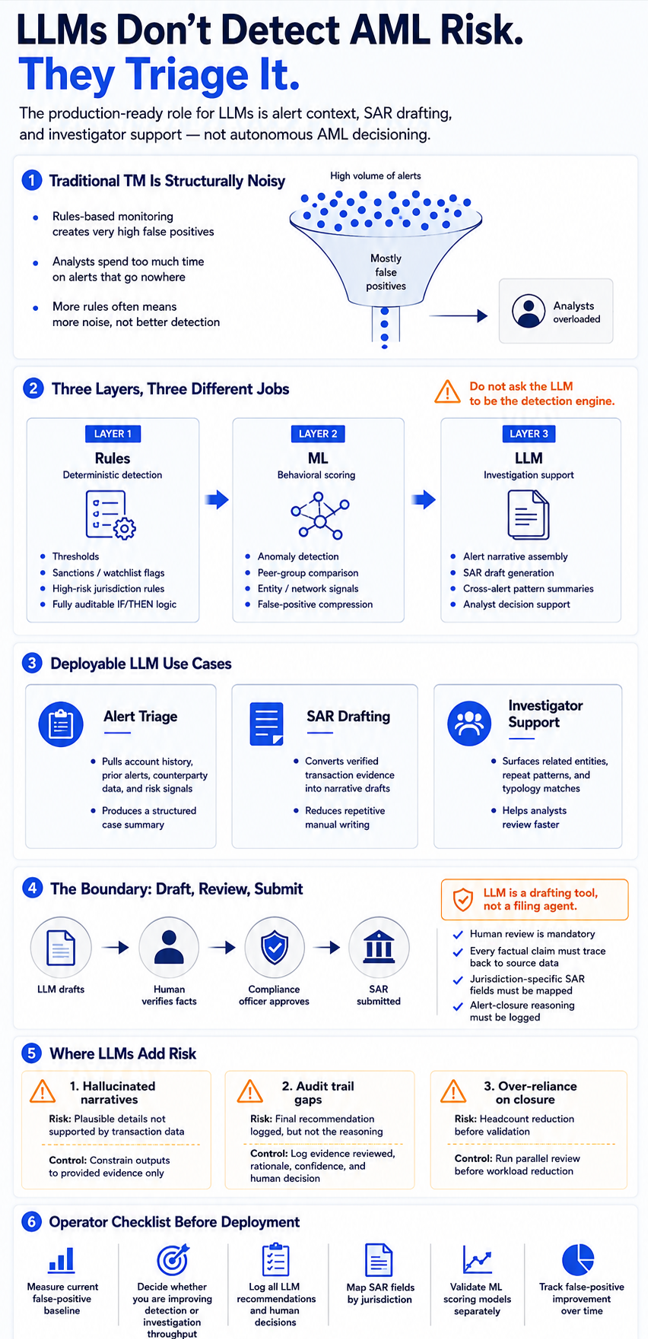

Traditional TM systems generate 90–95% false positives. LLMs are the third layer in a three-tier architecture — handling alert triage, SAR drafting, and investigator support. Here is how it works in production and what the regulatory constraints actually require.

Traditional TM: 90–95% false positive rate. LLMs are a third layer — alert triage, SAR drafting, investigator support. NICE X-Sight AI Narrate: 70% SAR time savings (Feb 2024). HSBC: 2–4x suspicious activity found, 60% fewer alerts. SR 26-2 (Apr 2026) supersedes SR 11-7.

Traditional AML transaction monitoring systems generate 90–95% false positive rates. Analysts spend 90% of their time investigating alerts that produce no action. Institutions processing high volumes were filing 10,000+ SARs per day by end of 2024. FinCEN civil penalties jumped 81% year-on-year in H1 2025, reaching $1.07B — regulators are no longer treating inadequate programs as process failures to be corrected through remediation plans.

LLMs are entering this space not as replacements for rules or ML models, but as a third layer in a three-tier architecture. The specific jobs they’re qualified for — alert narrative assembly, SAR draft generation, investigator decision support — are distinct from detection. Confusing the layers creates both operational and regulatory risk. This article covers what the three layers actually do, where LLMs fit specifically, the current vendor landscape, the regulatory constraints, the compliance boundary around SAR automation, and the failure modes operators need to manage.

Why the False Positive Rate Is Structurally Broken

The 90–95% false positive rate in traditional transaction monitoring isn’t primarily a calibration failure — it’s structural. Rules-based systems operate on static thresholds: IF transaction amount exceeds $10,000 AND account age is under 90 days AND jurisdiction is on a risk list, THEN alert. These thresholds are set conservatively because the cost of a missed suspicious transaction — a consent order, a fine, reputational damage — is far more visible to compliance leadership than the cost of thousands of analyst-hours spent on alerts that go nowhere.

The result is threshold creep. New rules get added after each regulatory examination. Old rules rarely get retired. Each individual addition is defensible in isolation; the cumulative effect is a system that flags nearly everything. Static thresholds also can’t adapt to behavioral context: a $9,500 cash deposit from a cash-intensive small business is operationally normal. The same deposit from a dormant account opened 60 days ago is not. Rules that fire on deposit size alone cannot distinguish between them without more signals — and adding more signals means more rules, more maintenance overhead, and more false positives from rule interactions.

This is the problem ML partially solved. Behavioral anomaly detection models, trained on account-level transaction history, learn what normal looks like per customer segment and flag genuine deviations. Best-in-class AI-augmented systems bring the false positive rate to 45–55%. The HSBC deployment reported 2–4x more genuinely suspicious activity identified alongside a 60% reduction in total alerts — a result that comes from the ML layer compressing noise, not from LLM assistance. Even at 55% false positive rate, analyst burden at scale is unsustainable without a triage layer. That is where LLMs enter.

Three Layers, Not One: Rules → ML → LLM

Three distinct tiers with distinct jobs. Confusing them — deploying LLMs at the detection layer, or expecting rules to do behavioral analysis — is where implementations fail. The rule engines vs ML architecture article covers hybrid design for fraud stacks; the same logic applies to AML.

Layer 1: Rules-based. Handles threshold-based detection, hard compliance requirements, and decisions that must be deterministic and immediately auditable. OFAC sanctions screening. Cash transaction reporting thresholds. High-risk jurisdiction flags. Rules provide a complete audit trail by definition — every fired rule is a logged IF/THEN statement. They can’t adapt without manual rewriting, and they generate the 90–95% false positive baseline when they’re the only layer.

Layer 2: ML-augmented. Handles behavioral anomaly scoring, customer segmentation, peer group analysis, and entity network graph detection. ML models learn what normal transaction behavior looks like across customer segments and flag statistical deviations. False positive rate compression — down to 45–55% at best-in-class — happens here. ML models require training data, periodic retraining as patterns shift, and independent validation under SR 26-2. This layer scores alerts; it doesn’t narrate them.

Layer 3: LLM-assisted triage. Handles the analyst-facing work: assembling relevant context from transaction records, account history, entity data, and prior alerts, and producing structured narrative that either supports or undermines the flagging decision. LLMs also draft SAR narratives from assembled evidence. This layer operates at the investigation stage, after detection. It doesn’t decide what’s suspicious — it helps analysts process what the upstream layers have identified. AI agents at this layer produce up to 87% reduction in manual monitoring efforts, roughly 115 minutes saved per analyst per day, with complex cases investigated 90% faster on average.

LLM’s Specific Jobs in the Stack

Three concrete tasks where LLMs add operational value today.

Alert narrative assembly. A flagged alert from an ML model arrives with a risk score and a transaction record. Without context assembly, an analyst manually pulls account history, checks prior alerts, reviews counterparty data, looks up entity relationships, and decides whether to escalate — each alert requiring 20–40 minutes of investigative work. An LLM agent given access to the relevant data sources assembles this context automatically and produces a structured summary in seconds: why the alert was scored, what the account history shows, what prior alerts exist, what entity connections are visible. This is the mechanism behind the 87% manual effort reduction.

SAR draft generation. Suspicious Activity Report narratives require specific structured content — description of the activity, accounts and entities involved, transaction details, basis for the filing decision. Writing these accurately from raw transaction data is time-consuming, formulaic work. NICE Actimize’s X-Sight AI Narrate, launched February 2024, synthesizes transaction data into SAR narratives and delivers 70% time savings in SAR filing and 50% reduction in investigation time. It also identifies 30–50% more suspicious transactions by surfacing patterns that manual review misses. Flagright takes a privacy-first approach with a proprietary LLM for narration that doesn’t route data to third-party model providers. ComplyAdvantage Mesh (October 2025) includes natural language rule-building — compliance teams construct monitoring logic in plain English without engineering support, a different LLM application but significant for rule maintenance overhead.

Investigator decision support. Beyond individual alert triage, LLMs can surface cross-alert patterns: accounts appearing across multiple flagged transactions, entity relationships spanning geographies, typology matches against known laundering patterns. FinCEN figures show institutions using AI-based AML tools filed 15% more accurate SARs in 2024 — quality improves alongside volume efficiency.

The Vendor Landscape

NICE Actimize is the enterprise benchmark. X-Sight AI Narrate (February 2024) is the specific LLM product — GenAI synthesizing transaction data into SAR narratives with 70% filing time savings. Chartis 2024 Category Leader, Datos Insights 2024 AML Impact Award. For large institutions already on Actimize, this is the lowest-friction LLM deployment path.

Quantexa combines entity resolution, knowledge graph analysis, and AI through Q Assist (June 2024). The entity resolution layer — connecting transactions across disparate data sources — is the differentiation. Reported metrics: 90% more accuracy, 60x faster analytical model resolution. Chartis Category Leader in both 2024 and 2025. Better suited for institutions with complex entity relationship problems than for volume SAR drafting.

Sardine is an agentic platform unifying fraud, AML, and real-time transaction monitoring with 500+ pre-built rules. $70M Series C February 2025, 130% YoY ARR growth. The December 2025 partnership with Helix Q2 targets sponsor bank TM, BSA, and AML programs. Strong on real-time payments fraud; the unified fraud-plus-AML architecture reduces integration overhead of running separate vendor stacks.

ComplyAdvantage Mesh (October 2025) — AI-native platform unifying screening, risk scoring, transaction monitoring, and real-time payments analysis. Natural language rule-building is operationally significant for compliance teams who currently wait for development sprints to modify monitoring logic. Claims 70% false positive reduction. Their 2025 survey: 93% of firms using AI for AML screening.

Temenos FCM AI Agent (2024) claims sub-2% false positive rates versus the 5–8% industry average for conventional systems. Available on-premises or cloud, relevant for institutions with strict data residency requirements. The claim is notable if it holds in independent validation.

Featurespace ARIC Risk Hub uses proprietary Adaptive Behavioral Analytics, Cambridge-developed and patented, deployed across 180+ countries. The adaptive model updates per-customer in near-real-time rather than on batch retraining cycles. 2025 Datos Insights Silver; Card and Payments Awards double win 2025. Stronger on Layer 2 behavioral anomaly detection than on LLM narrative generation.

Regulatory Landscape: What You Must Be Able to Explain

Every major jurisdiction permits AI in AML programs. Every major jurisdiction requires explainability of the decisions those AI systems make — including alert-closure decisions, not just SAR filings.

FinCEN (June 2024) explicitly encourages ML and AI for reducing false positives and provides a safe harbor for responsible innovation. The April 2026 NPRM moves toward an effectiveness-based, risk-driven AML/CFT framework. The implication: a program generating 95% false positives is not an effective program, regardless of rule volume. Comment period closes June 9, 2026; 12-month implementation target.

SR 26-2 (Federal Reserve/FDIC/OCC, April 17, 2026) supersedes SR 11-7. Independent validation, governance, and audit trail requirements are preserved. GenAI is explicitly outside SR 26-2’s scope — dedicated guidance is signaled but not yet issued. Traditional ML models for alert scoring are fully in scope and require formal model risk management: training data documentation, validation methodology, performance monitoring, and a model inventory. Operators deploying ML for alert scoring without SR 26-2-aligned validation programs are carrying unexamined regulatory exposure.

EU AI Act (entered force August 2024) creates a transparency obligation directly applicable to AML AI: financial institutions must be able to clarify why transactions were flagged as suspicious. A gradient boosting score alone is not sufficient — feature importance outputs that explain what drove the score are required. For LLM-assisted triage, the reasoning process must be logged.

MAS AI Guidelines (May 2025) are the most detailed single-jurisdiction AI risk management standard for financial services. Following a mid-2024 thematic review of AI and GenAI model risk, MAS published standards covering model governance, data quality, bias assessment, and human oversight. The April 2024 COSMIC platform — enabling collaborative suspicious activity pattern sharing between institutions — is separately relevant for AML.

FATF (June 2024 Singapore plenary): AI and ML enhance monitoring capabilities, but explainability and transparency are critical. Data used by AI systems must be scrutinizable and verifiable by regulators and auditors. This is an exam question during supervisory inspections, not an aspiration.

The practical implication: you need audit trails for closed alerts. If your LLM triage layer closes 10,000 alerts per day as false positives, the basis for those decisions must be logged — the data reviewed, the reasoning chain, the confidence level, and the human reviewer’s final call.

SAR Automation: The Compliance Boundary

LLM-generated SAR narratives are operationally real. NICE Actimize, Flagright, and AWS have published guidance on GenAI SAR generation. No regulator has prohibited LLM-generated SAR content. FinCEN 2024 figures show institutions using AI-based AML tools filed 15% more accurate SARs — the quality concern runs both ways.

The compliance boundary: LLM drafts, human reviews, human submits. A compliance officer must review every narrative before submission, confirm that facts stated are accurate against underlying transaction data, verify that jurisdiction-specific fields are complete, and sign off on the filing decision. The LLM is a drafting tool, not a filing agent.

The jurisdiction-specific field problem is not trivial. SAR formats differ meaningfully between FinCEN (US), the NCA (UK), and AUSTRAC (Australia). A generic LLM trained on generic AML content may generate fluent narratives that miss required fields or apply wrong typology codes for a specific jurisdiction. Before deploying LLM narrative generation, build a field-mapping document against every jurisdiction you file in.

FinCEN’s December 2024 deepfake guidance (FIN-2024-DEEPFAKEFRAUD) adds a notation requirement for SARs where AI-facilitated fraud is involved. Institutions using AI for alert triage need SAR workflows that can apply this flag accurately — which requires human review to identify and apply correctly.

Where LLMs Add Risk

Three failure modes to manage actively.

Hallucinated SAR narratives. LLMs generate plausible text. When source transaction data is ambiguous or incomplete, a model can produce narratives containing details not present in the underlying record — fabricated amounts, invented counterparty descriptions, interpolated dates. A reviewer reading a well-structured narrative quickly may not catch fabrications. The defense is mandatory fact-checking against source data, not just narrative review for tone and completeness. Know whether your vendor constrains the LLM to only reference explicitly provided context, or whether it can draw on parametric knowledge.

Audit trail gaps for alert-closed decisions. If your LLM triage layer recommends closing alerts and your logging only captures the final recommendation rather than the reasoning chain and evidence reviewed, you cannot produce a defensible audit trail under FATF, the EU AI Act, or SR 26-2. The decision to not file a SAR is a regulated activity subject to examination. Logging the output without logging the basis is not sufficient.

Over-reliance on LLM closure without validation. The 87% manual effort reduction is achievable only if the model’s closure recommendations are well-calibrated. Operators who reduce human reviewer headcount before validating model accuracy are creating a program gap, not an efficiency. The correct sequence: run LLM triage in parallel with human review for a validation period, measure alignment, identify unreliable categories, then adjust workload allocation. This mirrors the model validation requirements in SR 26-2 applied operationally.

Operational Recommendations

1. Measure your current false positive rate. Before adding any AI layer, calculate the baseline. Pull three months of alert data, categorize closed alerts by closure reason, and compute the ratio. You cannot evaluate vendor claims or measure improvement without this number.

2. Identify which layer you’re adding AI to. Detection improvement (Layer 2, ML behavioral scoring) requires different vendor evaluation criteria, integration architecture, and validation work than investigation throughput improvement (Layer 3, LLM triage). Vendors often blur these distinctions. The AI fraud detection article covers the detection layer in detail; this article covers Layer 3.

3. Log everything, including alert-closure reasoning. Every LLM triage recommendation, the evidence it was based on, the confidence level, and the human reviewer’s final decision must be logged with enough detail to reconstruct the decision path. Design the logging architecture before deploying the LLM layer. Retrofitting audit infrastructure after a regulator asks for it is substantially more expensive.

4. Map jurisdiction-specific SAR requirements before deploying LLM narration. Build a field-mapping document for every jurisdiction you file in. Validate LLM output covers every required field and handles the FIN-2024-DEEPFAKEFRAUD notation requirement. Run a parallel period — LLM drafts reviewed alongside manually written SARs — before switching to LLM-primary narration.

5. Run SR 26-2-aligned model validation for ML layers. If you’re deploying or operating ML models for alert scoring, your model risk management program needs formal coverage: documented training data provenance, validation methodology, performance monitoring thresholds, and a model inventory. For Federal Reserve, FDIC, or OCC-supervised institutions, this is not optional regardless of whether the model is vendor-supplied or internally built.

6. Track false positive rate improvement over time. FinCEN’s April 2026 NPRM signals movement toward effectiveness-based evaluation. A program that moves from 95% to 60% false positive rate over 18 months with documented AI augmentation is a program that tells a credible story in examination. A program that spent $3M on AI vendors and cannot show improvement in the underlying metrics tells a different story. Tie every vendor engagement to a measurable improvement target before signing.

Sources

Traditional AML transaction monitoring systems generate 90–95% false positive rates (Gartner 2025)

Checked:

FinCEN June 2024 proposed rule explicitly encourages ML/AI for AML and provides safe harbor for responsible AI innovation

Checked:

NICE Actimize X-Sight AI Narrate launched February 2024 — 70% SAR filing time savings, 50% investigation time reduction, 30–50% more suspicious transaction detection

Checked:

SR 26-2 issued April 17, 2026 by Federal Reserve, FDIC, OCC — supersedes SR 11-7; GenAI explicitly outside scope

Checked:

EU AI Act (Regulation 2024/1689) entered force August 2024 — requires transparency in AI decision-making including AML flagging

Checked:

MAS AI Guidelines published May 2025 following mid-2024 thematic review of AI/GenAI model risk management practices

Checked:

Sardine raised $70M Series C (February 2025) with 130% YoY ARR growth in 2024

Checked:

FinCEN civil penalties increased 81% YoY in H1 2025 — $1.07B vs $590M in H1 2024

Checked:

Source types explained in our Methodology.